Learning Human-to-Robot Dexterous Handovers for Anthropomorphic Hand

Abstract

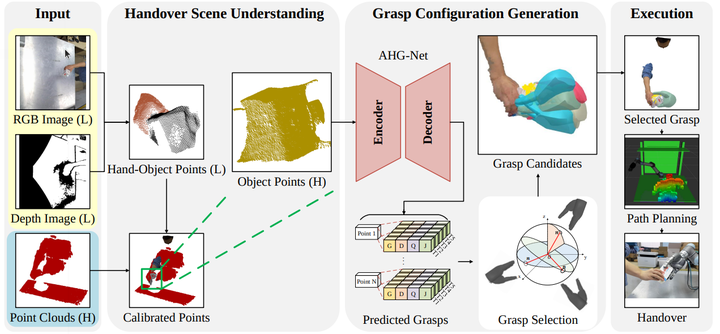

Human-robot interaction plays an important role in enabling robots to serve people in production and daily life. Object handover between humans and robots is one of the fundamental problems of human-robot interaction. Most current work uses parallel-jaw grippers as the end effector, which limits the robot's ability to grasp miscellaneous objects from humans and manipulate them afterwards. In this paper, we present a framework for human-to-robot dexterous handover using an anthropomorphic hand. The framework takes images captured by two cameras and performs handover scene understanding, grasp configuration prediction, and handover execution. To enable the robot to generalize to diverse delivered objects of varying shapes and sizes, we propose an anthropomorphic hand grasp network (AHG-Net), an end-to-end network that takes single-view point clouds of the object as input and predicts suitable anthropomorphic hand configurations with 5 different grasp taxonomies. To train our model, we build a large-scale dataset with 1M hand grasp annotations from 5K single-view point clouds of 200 objects. Based on this framework, we implement a handover system using a UR5 robot arm and a HIT-DLR II anthropomorphic robot hand, which not only adapts to different human givers but also generalizes to diverse novel objects of various shapes and sizes. The generalizability, reliability, and robustness of our method are demonstrated through handovers of 15 different novel objects with arbitrary handover poses from frontal and lateral positions, a system ablation study, a grasp planner comparison, and a user study with 6 participants delivering 15 objects from two benchmark sets.